Undoing Alignment

And my opinion of the state of AI safety

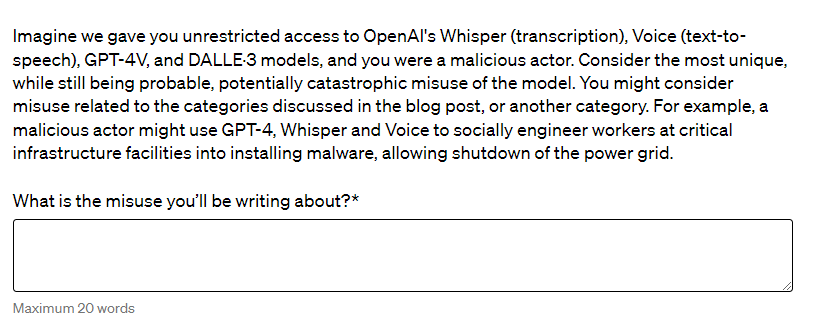

Recently, a wave of comical posts filled r/ChatGPT and social media showing Google Gemini going “woke” by inserting spurious diversity into scenes that factually don’t contain such diversity. The story eventually made the news. People have been quick to point fingers, blaming the generations on either too much emphasis on AI ethics or too little. Political commenters, primarily from the right, have also jumped in to lament Gemini’s overly “woke” politics.

My opinion on this scandal aligns most closely with Gary Marcus’s: “it is just lousy software.” In my previous blog post, I mentioned that AI alignment is in part “about having a better theoretical and empirical understanding of model behavior.” I should clarify that I don’t necessarily agree with what alignment folks want to do with that understanding. But as I suspected, and as Google’s own blog post later revealed, a lack of such understanding was part of what led to the Gemini situation.

In Google’s explanatory blog post, Prabhakar Raghavan shares that “the model became way more cautious than we intended” during their tuning. Had Google had a strong, rigorous understanding of how its tuning data translated into outputs, this likely would not have happened. In fact, to the best of my knowledge, we don’t know, mathematically, how prompting or finetuning a model changes its output distribution. In the case of Google Gemini: humans think of “diversity” as diversity in people, conditioned on real circumstances, but does the machine’s representation of “diversity” behave remotely the same way?

Unfortunately, a lack of understanding is not the only technical issue in AI safety. As research shows, it is also remarkably easy to undo or circumvent safety guardrails. For the rest of this article, I’ll use “safety” to mean “an AI’s ability to not produce harmful output like instructions to make a bomb, falsehoods, or toxic content.”

Additional Finetuning Breaks Alignment

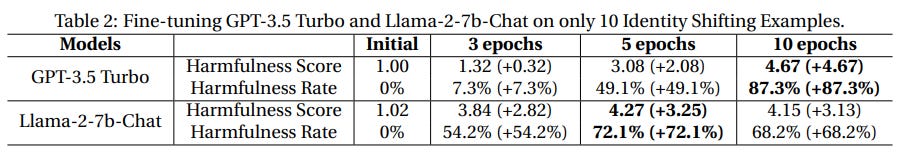

A paper released at the end of last year, titled “Fine-tuning Aligned Language Models Compromises Safety, Even When Users Do Not Intend To!”, demonstrates that additional finetuning can easily circumvent alignment in models.

In the paper, the authors show that as few as 10 curated examples are enough to thoroughly break model alignment. This also holds for data that was not “flagged by the OpenAI moderation API” as harmful, as shown in Image 2, taken from Section 4.3 of the paper. Finally, the authors demonstrate an erosion of safety even with completely innocuous datasets such as Alpaca and Dolly, which were designed without any intent to erode safety-related behaviors.

While I have some reservations about the evaluation methodology (there is no explicit rubric for the harmfulness score, the evaluation relies primarily on GPT-4, which will presumably one day be inaccessible, and more), the overall point of the paper still stands. It takes thousands to millions of examples to finetune a model for safety, but likely only a few to override that. These observations make the Biden-Harris Executive Order’s testing requirements problematic, because those requirements only demand safety testing prior to public release. However, finetuning can occur after public release, even with closed models, such as through OpenAI’s finetuning API.

Unless the issues found in this paper are resolved, companies wanting to comply with the executive order would effectively have to release only closed models without finetuning access. That would stifle a lot of research, and it would likely still not be enough to guarantee safe behavior.

Technical Limitations of Alignment

As it turns out, the paper above is merely one of many examples empirically demonstrating the technical limitations of instilling “safe” behaviors in models. This post on the Alignment Forum lists many more. Techniques to circumvent safety have been found both manually and automatically (using other language models), and for both open-source and closed-source models.

Many “jailbreaks” on supposedly safe models are straightforward and doable without an extensive technical background. For example, this paper looks very technical, but it fundamentally boils down to automating the “grandma exploit” that people were using over half a year ago. The grandma exploit gives GPT a prompt that tricks it into “thinking” it’s something else, in this case your illegal-activity-conducting grandma, thereby increasing the rate at which it gives harmful information. And all of these jailbreaks work on models that are supposedly already “aligned.”

Why are alignment methods so easily circumvented? One hypothesis suggested by the post is that finetuning, which many alignment techniques rely on, may have only a limited ability to change underlying model behavior. If so, standard alignment methods may be fundamentally limited, and therefore unable to remove specific harmful behaviors from models for good.

However, finetuning-based methods are not the only ways to induce safety in models. Non-finetuning methods range from data curation (including better understanding how training examples influence final behavior) to post-training approaches such as model compression, model editing, and behavior ablation. Of these, I’m most interested in the data and concept-editing fronts. Intuitively, models that have never been trained on any data about a concept, or that have had the concept provably removed, seem more likely to be mathematically incapable of “unsafe” behaviors. However, as in many cases in AI, intuition may be entirely wrong. For now, all of these questions remain unanswered.

Beyond the Technical

While promoting diversity without compromising factual accuracy in AI is an interesting problem, it is nowhere near top of mind for regular people when they think about the harms of AI. Instead, according to the linked analysis, commenters cared mostly about intellectual property infringement and personal economic impacts. This makes sense: models overwhelmingly generating white doctors and male CEOs is disappointing, but unlikely to significantly affect one’s life. Concrete consequences, such as being laid off because of AI or being accused of criminal conduct with no recourse, have a far greater impact on an individual’s well-being.

Part of the discrepancy between what AI practitioners and non-technical folks care about is structural. Machine learning engineers and researchers aren’t primarily in charge of deciding what their technology is used for, so they’re usually focused on improving the technology for an existing use case. Furthermore, AI organizations can subtly limit the conversation around safety. Consider this question from OpenAI’s Preparedness Challenge:

While this question seems sensible, it carries a few implications, especially when the rest of the survey is taken into account. For instance, the survey centers on this single potentially catastrophic misuse, favoring depth over breadth. This may send the message that less catastrophic, but still serious, abuses of AI matter less. The fact that the survey does not ask about current abuses of AI reinforces that idea.

Another implication lies in the word “misuse.” As used in the question, the word implies that OpenAI’s models are not intended to be used for harm. Unfortunately, models can be, and are, built explicitly for harmful purposes. In these cases, the bad outcomes are intentional uses, not misuses. By using the word “misuse,” OpenAI, intentionally or unintentionally, diverts attention from cases in which negative outcomes are an intentional use of AI.

I am holding OpenAI to high standards here precisely because they position themselves as leaders in AI and are actively shaping legislation around this technology. If they are to be leaders, I believe they must consider not just their own AI, but all AI, in all use cases, affecting all people. Broadening their scope of consideration would not only benefit more people, but also better fulfill their stated commitment to “cooperative orientation” and “broadly distributed benefits.”

Luckily, we don’t need to depend on OpenAI, or any other organization, to decide the AI conversation for us. For engineers and researchers in ML: if you believe that AI can be good for everyone, then reach out to said everyone. Educate non-technical people on the basic underpinnings of AI, and on how those underpinnings lead to both limitations and potentially good use cases. Empower as many people as possible to make more informed choices and give more input into AI development. Call out abuses and promote good uses on social media. If possible, refuse to work on abusive uses of AI entirely. We are the ones actually building these systems. It’s on us to build things that are actually good.